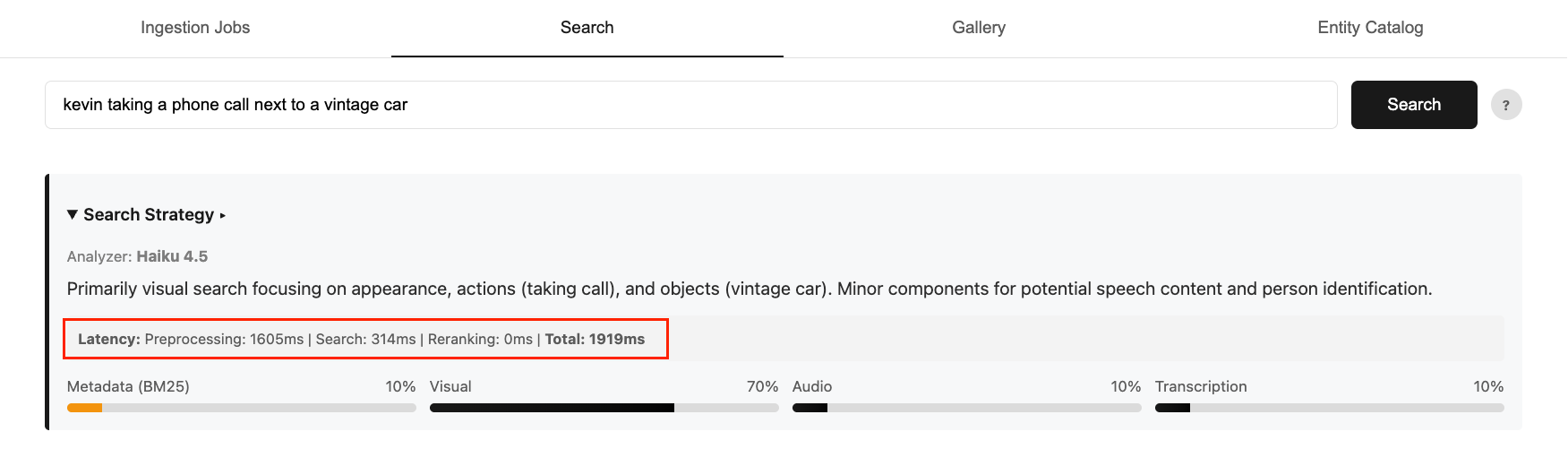

Optimizing models for video semantic search requires balancing accuracy, cost, and latency. Faster, smaller models lack routing intelligence, while larger, accurate models add significant latency overhead. In Part 1 of this series, we showed how to build a multimodal video semantic search system on AWS with intelligent intent routing using the Anthropic Claude Haiku model in Amazon Bedrock. While the Haiku model delivers strong accuracy for user search intent, it increases end-to-end search time to 2-4 seconds. This contributes to 75% of the overall latency.

Figure 1: An example end-to-end query latency breakdown

Now consider what happens as the routing logic grows more complex. Enterprise metadata can be far more complex than the five attributes in our example (title, caption, people, genre, and timestamp). Customers may factor in camera angles, mood and sentiment, licensing and rights windows, and more domain-specific taxonomies. More nuanced logic means a more demanding prompt, and a more demanding prompt leads to more expensive and slower responses. This is where model customization comes in. Rather than choosing between a model that’s fast but too simple or one that’s accurate but too expensive or too slow, we can achieve all three by training a small model to perform the task accurately at much lower latency and cost.

In this post, we show you how to use Model Distillation, a model customization technique on Amazon Bedrock, to transfer routing intelligence from a large teacher model (Amazon Nova Premier) into a much smaller student model (Amazon Nova Micro). This approach cuts inference cost by over 95% and reduces latency by 50% while maintaining the nuanced routing quality that the task demands.

Solution overview

We will walk through the full distillation pipeline end to end in a Jupyter notebook. At a high level, the notebook contains the following steps:

- Prepare training data — 10,000 synthetic labeled examples using Nova Premier and upload the dataset to Amazon Simple Storage Service (Amazon S3) in Bedrock distillation format

- Run distillation training job — Configure the job with teacher and student model identifiers and submit via Amazon Bedrock

- Deploy the distilled model — Deploy the custom model using on-demand inference for flexible, pay-per-use access

- Evaluate the distilled model — Compare routing quality against the base Nova Micro and the original Claude Haiku baseline using Amazon Bedrock Model Evaluation

The complete notebook, training data generation script, and evaluation utilities are available in the GitHub repository.

Prepare training data

One of the key reasons we chose model distillation over other customization techniques like supervised fine-tuning (SFT) is that it does not require a fully labeled dataset. With SFT, every training example needs a human-generated response as ground truth. With distillation, you only need prompts. Amazon Bedrock automatically invokes the teacher model to generate high-quality responses. It applies data synthesis and augmentation techniques behind the scenes to produce a diverse training dataset of up to 15,000 prompt-response pairs.

That said, you can optionally provide a labeled dataset if you want more control over the training signal. Each record in the JSONL file follows the bedrock-conversation-2024 schema, where the user role (the input prompt) is required, and the assistant role (the desired response) is optional. See the following examples, and reference Prepare your training datasets for distillation for more detail:

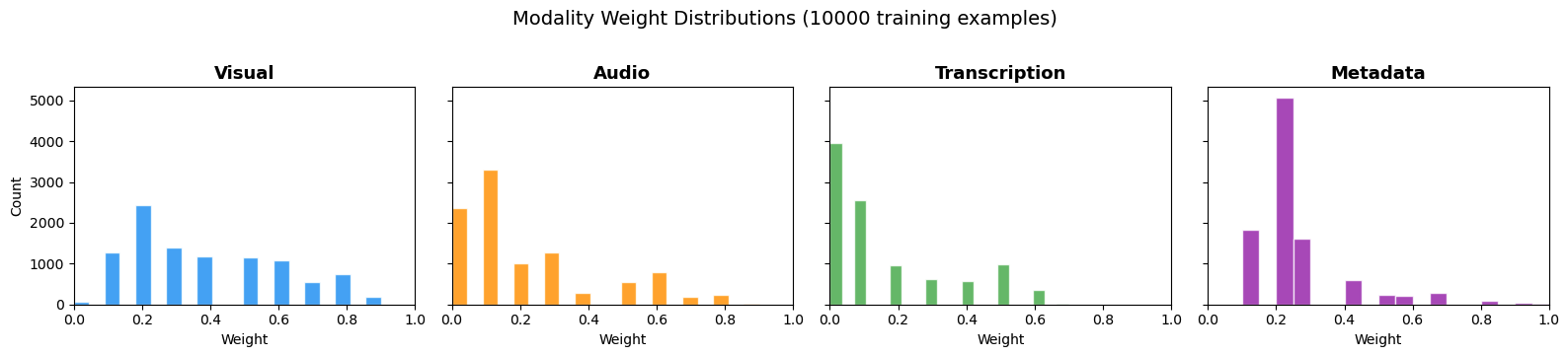

For this post, we prepared 10,000 synthetic labeled examples using Nova Premier, the largest and most capable model in the Nova family. The data was generated with a balanced distribution across visual, audio, transcription, and metadata signal queries, The examples cover the full range of expected search inputs, represent different difficulty levels, include edge cases and variations, and prevent overfitting to narrow query patterns. The following chart shows the weight distribution across the four modality channels.

Figure 2: The weight distribution across the 10,000 training examples

If you need additional examples or want to adapt the query distribution to your own content domain, the provided generate_training_data.py script can be used to synthetically generate more training data using Nova Premier.

Run distillation training job

With the training data uploaded to Amazon S3, the next step is to submit the distillation job. Model distillation works by using your prompts to first generate responses from the teacher model. It then uses those prompt-response pairs to fine-tune the student model. In this project, the teacher is Amazon Nova Premier and the student is Amazon Nova Micro, a fast, cost-efficient model optimized for high-throughput inference. The teacher’s routing decisions become the training signal that shapes the student’s behavior.

Amazon Bedrock manages the entire training orchestration and infrastructure automatically. There is no cluster provisioning, no hyperparameter tuning, and no teacher-to-student model pipeline setup required. You specify the teacher model, the student model, the S3 path to your training data, and an AWS Identity and Access Management (IAM) role with the necessary permissions. Bedrock handles the rest. The following is an example code snippet to trigger the distillation training job:

The job runs asynchronously. You can monitor progress in the Amazon Bedrock console under Foundation models > Custom models, or programmatically:

status = bedrock_client.get_model_customization_job(

jobIdentifier=job_arn)['status']

print(f"Job status: {status}") # Training, Complete, or FailedTraining time varies depending on the dataset size and the student model selected. For 10,000 labeled examples with Nova Micro, expect the job to complete within a few hours.

Deploy the distilled model

Once the distillation job is complete, the custom model is available in your Amazon Bedrock account and ready to deploy. Amazon Bedrock offers two deployment options for custom models: Provisioned Throughput for predictable, high-volume workloads, and On-Demand Inference for flexible, pay-per-use access with no upfront commitment.

For most teams getting started, on-demand inference is the recommended path. There is no endpoint to provision, no hourly commitment, and no minimum usage requirement. The following is the deployment code:

Once the status shows InService, you can invoke the distilled model exactly as you would any other base model using the standard InvokeModel or Converse API. You pay only for the tokens you consume at Nova Micro inference rates: $0.000035 per 1,000 input tokens and $0.000140 per 1,000 output tokens.

Evaluate the distilled model

Before comparing against the original router, it is worth validating that distillation improved the base model’s ability to follow the routing task. The following table shows the same prompt run through base Nova Micro and the distilled Nova Micro side by side.

| Query | Distilled Nova Micro | Base Nova Micro |

"CEO discussing quarterly earnings " |

{"visual": 0.2, "audio": 0.3, "transcription": 0.4, "metadata": 0.1, "reasoning": "The query focuses on spoken content (transcription) about earnings, but visual cues (CEO's appearance) and audio (tone/clarity) are also important..."} |

Here’s a JSON representation of the information you requested for a video search query about a CEO discussing quarterly earnings:

|

"sunset over mountains" |

{"visual": 0.8, "audio": 0.0, "transcription": 0.0, "metadata": 0.2, "reasoning": "The query focuses on a visual scene (sunset over mountains), with no audio or transcription elements. Metadata might include location or time-related tags."} |

Here’s a JSON representation for a video search query “sunset over mountains” that includes visual, audio, transcription, metadata weights (sum=1.0), and reasoning:

|

The base model struggles with both instructions and output format consistency. It produces free-text responses, incomplete JSON, and non-numeric weight values. The distilled model consistently returns well-formed JSON with four numeric weights that sum to 1.0, matching the schema required by the routing pipeline.

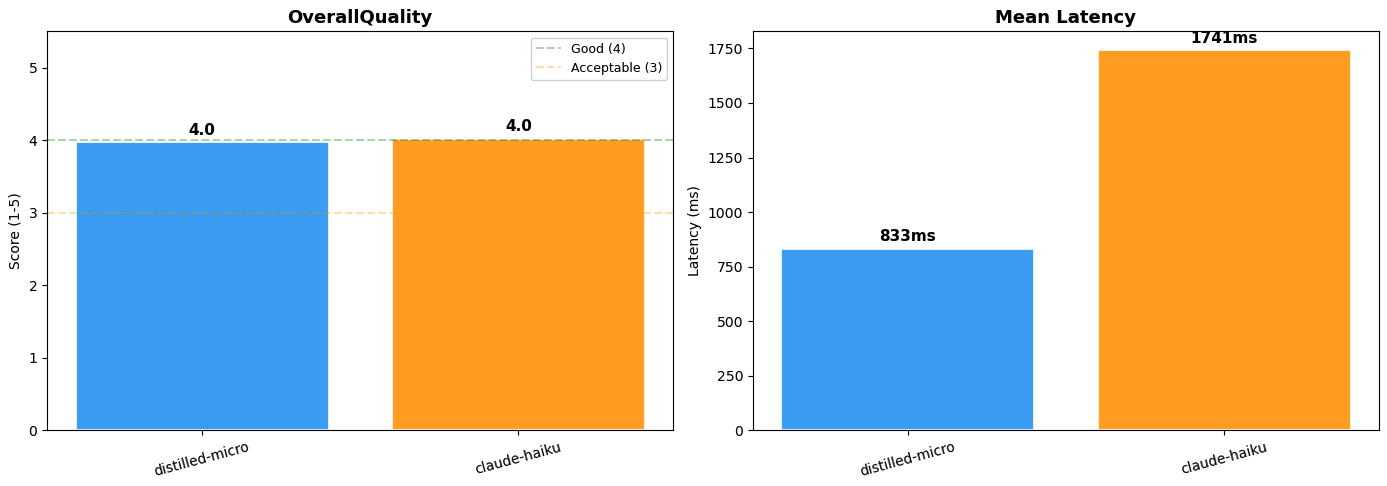

Comparing against the original Claude Haiku router, both models are evaluated against a held-out set of 100 labeled examples generated by Nova Premier. We use Amazon Bedrock Model Evaluation to run the comparison in a structured, managed workflow. To assess routing quality beyond standard metrics, we defined a custom OverallQuality rubric (see the following code block) that instructs Claude Sonnet to score each prediction on two dimensions: weight accuracy against ground truth and reasoning quality. Each dimension maps to a concrete 5-point threshold, so the rubric penalizes both numerical drift and generic boilerplate reasoning.

The distilled Nova Micro model achieved a large language model (LLM)-as-judge score of 4.0 out of 5, near-identical routing quality to Claude 4.5 Haiku at roughly half the latency (833ms vs. 1,741ms). The cost advantage is equally significant. Switching to the distilled Nova Micro model reduces inference costs by over 95% on both input and output tokens, with no upfront commitments under on-demand pricing. Note: LLM-as-judge evaluation is non-deterministic. Scores may vary slightly across runs.

Figure 3: Model performance comparison (Distilled Nova Micro vs. Claude 4.5 Haiku)

The following is a table summary of side-by-side results:

| Metric | Distilled Nova Micro | Claude 4.5 Haiku |

| LLM-as-judge Score | 4.0 / 5 | 4.0 / 5 |

| Mean Latency | 833ms | 1,741ms |

| Input Token Cost | $0.000035 / 1K | $0.80–$1.00 / 1K |

| Output Token Cost | $0.000140 / 1K | $4.00–$5.00 / 1K |

| Output Format | Consistent JSON | Inconsistent |

Clean up

To avoid ongoing charges, run the cleanup section of the notebook to remove any provisioned resources, including deployed model endpoints and any data stored in Amazon S3.

Conclusion

This post is the second part of a two-part series. Building on Part 1, this post focuses on applying model distillation to optimize the intent routing layer built in the video semantic search solution. The techniques discussed help address real production tradeoffs, such as balancing routing intelligence with latency and cost at scale while maintaining search accuracy. By distilling Amazon Nova Premier’s routing behavior into Amazon Nova Micro using Amazon Bedrock Model Distillation, we reduced inference cost by over 95% and cut preprocessing latency in half while preserving the nuanced routing quality that the task demands. If you are operating multimodal video search at scale, model distillation is a practical path to production-grade cost efficiency without sacrificing search accuracy. To explore the full implementation, visit the GitHub repository and try the solution yourself.