Run Google’s latest omni-capable open models faster on NVIDIA hardware, from Jetson Orin Nano modules and GeForce RTX desktops to the new DGX Spark, and build personalized, always-on AI assistants like OpenClaw without paying a “token tax” on every action.

The landscape of modern AI is shifting rapidly. We are moving away from total reliance on massive, generalized cloud models and entering the era of local, agentic AI powered by platforms like OpenClaw. From deploying a vision-enabled assistant on an edge device to building an always-on agent that automates complex coding workflows, the range of generative AI workloads that can run on local hardware keeps growing.

However, developers face a persistent bottleneck and a massive hidden financial burden: The “Token Tax.” How do you get an AI to constantly process multimodal inputs rapidly and reliably without racking up astronomical cloud computing bills for every single token generated?

One answer is to run the new Google Gemma 4 family locally, which removes per-token API fees, with NVIDIA GPUs as the hardware platform.

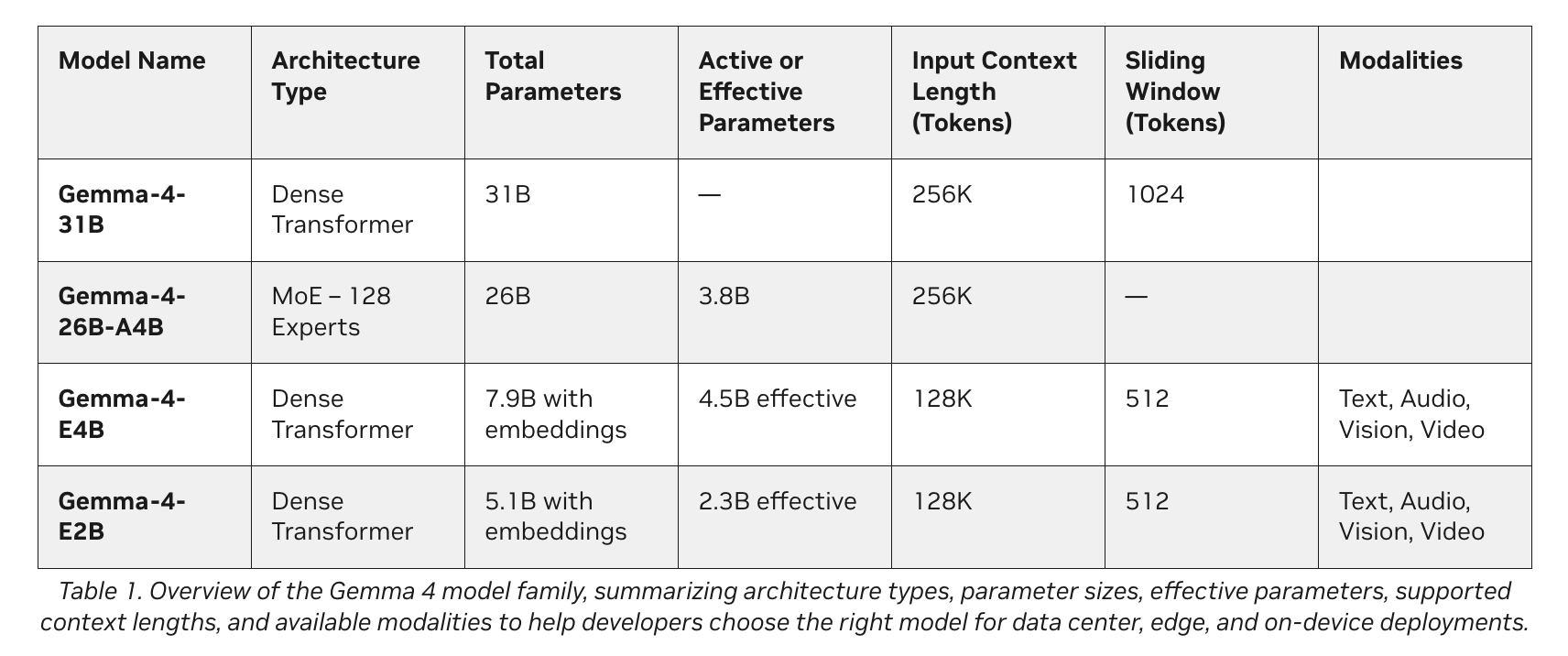

Google’s latest additions to the Gemma 4 family introduce a class of small, fast, and omni-capable models built explicitly for efficient local execution across a wide range of devices. Optimized in collaboration with NVIDIA, these models scale effortlessly from the Jetson Orin Nano edge AI modules to GeForce RTX PCs, workstations, and the DGX Spark personal AI supercomputer.

The Agentic AI Paradigm

Think of the Gemma 4 family as a high-performance engine for your local AI agents. Spanning E2B, E4B, 26B, and 31B variants, these models are designed for efficient deployment anywhere. They natively support structured tool use (function calling) for agents and offer interleaved multimodal inputs, meaning developers can mix text and images in any order within a single prompt.
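To make the two capabilities above concrete, here is a minimal sketch of how an agent loop might represent an interleaved text-and-image prompt and dispatch a structured tool call. The part format (`type`/`value`), the `add` tool, and the JSON call shape (`name`/`arguments`) are assumptions chosen for illustration; they are not Gemma 4's actual prompt or tool-call format, which depends on the runtime you use.

```python
import json
from typing import Any, Callable, Dict, List

IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg")


def build_interleaved_prompt(*parts: str) -> List[Dict[str, str]]:
    """Keep text and image references in the order the caller supplied them.

    ASSUMPTION: the {"type", "value"} layout is illustrative only.
    """
    prompt = []
    for part in parts:
        kind = "image" if part.lower().endswith(IMAGE_EXTENSIONS) else "text"
        prompt.append({"type": kind, "value": part})
    return prompt


def add(a: float, b: float) -> float:
    """Hypothetical tool the agent is allowed to call."""
    return a + b


# Registry mapping tool names to Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {"add": add}


def dispatch_tool_call(raw: str) -> Any:
    """Parse a JSON tool call emitted by the model and run the matching tool.

    ASSUMPTION: the model returns {"name": ..., "arguments": {...}}.
    """
    call = json.loads(raw)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))
```

In a real agent, the result of `dispatch_tool_call` would be appended to the conversation and sent back to the model so it can continue reasoning with the tool output.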

Depending on hardware and goals, developers generally use one of two main tiers:

1. Ultra-Efficient Edge Models (E2B and E4B)

- The Tech: Gemma 4 E2B and E4B.

- How it Works: These models are built for ultra-efficient, low-latency inference at the edge. They run completely offline, so there is no network round trip and no API fee.

- Best For: IoT devices, robotics, and localized sensor networks.

- Hardware Needed: Devices including NVIDIA Jetson Orin Nano modules.

2. High-Performance Agentic Models (26B and 31B)

- The Tech: Gemma 4 26B and 31B.

- How it Works: These variants are designed specifically for high-performance reasoning and developer-centric workflows.

- Best For: Complex problem-solving, code generation, and running agentic AI.

- Hardware Needed: NVIDIA RTX GPUs, workstations, and DGX Spark systems.

The Hardware Reality: Why NVIDIA Accelerates Gemma 4

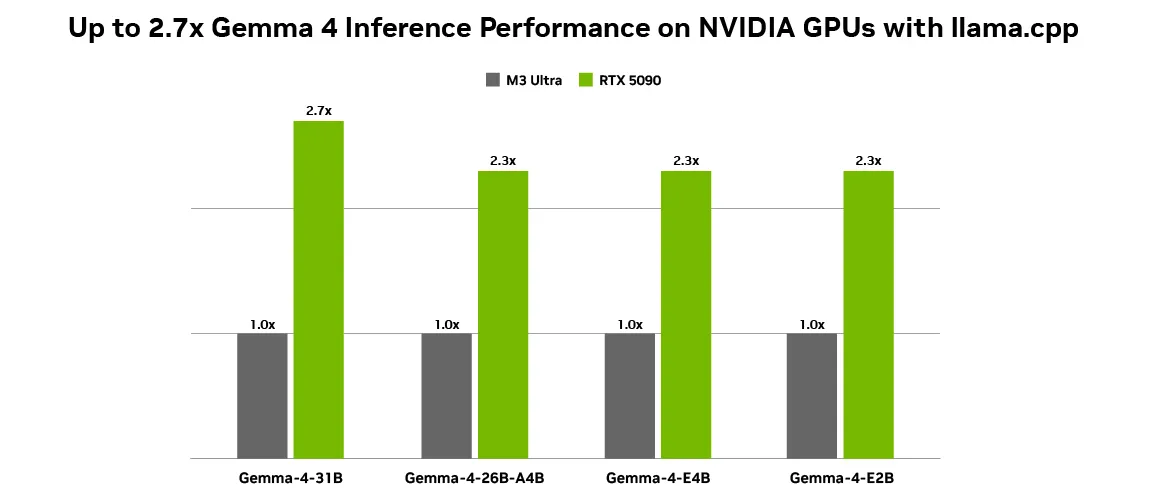

One of the most critical factors in making local AI financially viable is token generation throughput. Open models like the Gemma 4 family run well on NVIDIA GPUs because NVIDIA Tensor Cores accelerate AI inference workloads, delivering higher throughput and lower latency. An RTX 5090 delivers up to 2.7x the inference performance of an M3 Ultra desktop using llama.cpp. That throughput makes local inference, with no per-token fees, viable for heavy, continuous agentic workloads.

OpenClaw & The “Token Tax” Solution

Why is the combination of Gemma 4 and NVIDIA winning the local AI race? It comes down to speed and economics.

As local agentic AI gains momentum, applications like OpenClaw are enabling always-on AI assistants on RTX PCs, workstations, and DGX Spark systems. The latest Gemma 4 models are fully compatible with OpenClaw, allowing users to build capable local agents that continuously draw context from personal files, applications, and workflows to automate daily tasks.

For an always-on assistant like OpenClaw, running fast and locally isn’t just a technical preference; it is an economic necessity. If you were to use a cloud API to read every personal file, analyze screen context, and process thousands of automated actions an hour, the resulting “Token Tax” would be astronomical. Paying a cloud provider for every single token generated by a constantly active background agent is financially unsustainable. By running Gemma 4 locally on an NVIDIA GPU, users eliminate these API token costs entirely. You get fast inference with no network latency and no per-token metering, which makes an always-on AI feel like a native extension of your operating system.
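The economics above can be sketched as simple arithmetic. The functions below estimate a monthly cloud bill for a continuously running agent and how many months of avoided fees it would take to pay for local hardware. Every input (token volume, price per million tokens, hardware price, power cost) is a hypothetical parameter you would supply yourself; none of the figures come from a real price list.

```python
def monthly_api_cost(
    tokens_per_hour: int,
    usd_per_million_tokens: float,
    hours_per_day: float = 24.0,
    days: int = 30,
) -> float:
    """Estimated cloud bill for an agent that runs continuously."""
    total_tokens = tokens_per_hour * hours_per_day * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


def breakeven_months(
    hardware_usd: float,
    monthly_api_usd: float,
    monthly_power_usd: float = 0.0,
) -> float:
    """Months until avoided API fees cover the local hardware purchase.

    Returns infinity when local power costs meet or exceed the API bill.
    """
    monthly_saving = monthly_api_usd - monthly_power_usd
    if monthly_saving <= 0:
        return float("inf")
    return hardware_usd / monthly_saving


# Hypothetical workload: 1M tokens per hour at $2 per million tokens.
example_bill = monthly_api_cost(1_000_000, 2.0)
```

The break-even helper is a reminder that local inference removes the per-token fee but still carries hardware and electricity costs, so the payoff depends on how heavily the agent is used.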

Making It Secure: Meet NeMoClaw

While OpenClaw is a fantastic operating system for personal AI, enterprise and privacy-conscious users require stricter boundaries. To make these setups secure, developers can use NVIDIA NeMoClaw. NeMoClaw is an open-source stack that adds essential privacy and security controls to OpenClaw. With a single command, anyone can run always-on, self-evolving agents safely. Using the NVIDIA Agent Toolkit and OpenShell, NeMoClaw enforces policy-based guardrails, giving users total control over how their agents handle sensitive data. This pairs perfectly with local Nemotron or Gemma models to keep data completely offline, avoiding both cloud data leaks and cloud API token charges.
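As a generic illustration of what a policy-based guardrail means, the sketch below checks whether an agent may read a given file path. It is not NeMoClaw's API or policy format: the `PathPolicy` class, its fields, and the example paths are all hypothetical and only show the idea of an allowlist plus blocked file types.

```python
from pathlib import PurePosixPath
from typing import Iterable


class PathPolicy:
    """Hypothetical guardrail: allow reads only under approved directories."""

    def __init__(
        self,
        allowed_roots: Iterable[str],
        blocked_suffixes: Iterable[str] = (),
    ) -> None:
        self.allowed_roots = [PurePosixPath(root) for root in allowed_roots]
        self.blocked_suffixes = {suffix.lower() for suffix in blocked_suffixes}

    def allows(self, path: str) -> bool:
        candidate = PurePosixPath(path)
        # Reject traversal segments instead of trying to normalize them.
        if ".." in candidate.parts:
            return False
        # Reject file types that must never reach the model (e.g. key files).
        if candidate.suffix.lower() in self.blocked_suffixes:
            return False
        # Accept only paths that sit at or below an allowed root.
        return any(
            root == candidate or root in candidate.parents
            for root in self.allowed_roots
        )
```

An agent runtime would call `policy.allows(path)` before every file read and refuse the action when it returns `False`.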

Use Case Study 1: The “Always-On” Developer Assistant

- The Goal: Run an always-on coding assistant that constantly monitors a developer’s workflow to suggest code optimizations, debug errors in real time, and automate repetitive tasks.

- The Problem: Using cloud models for this creates a crippling token tax, as the assistant continuously reads hundreds of lines of code every minute. Additionally, uploading proprietary codebase snippets to the cloud creates security and IP risks.

- The Solution: Running Gemma 4 (31B variant) paired with OpenClaw locally on an NVIDIA GeForce RTX 5090 desktop.

- The Result: The developer receives fast code generation and debugging with no network round trip. Because the model runs locally, thousands of dollars in potential API token costs are eliminated, and proprietary code never leaves the workstation.

Use Case Study 2: The Edge Vision Agent

- The Goal: Deploy smart security cameras in a remote warehouse capable of tracking inventory and identifying hazards in real time using document and video intelligence.

- The Problem: Streaming 24/7 video feeds to a cloud vision model incurs an astronomical token tax and requires massive bandwidth. Standard local models are too large to fit on edge devices.

- The Solution: Deploying the Gemma 4 E2B model on an NVIDIA Jetson Orin Nano edge AI module. The model utilizes its rich vision and video capabilities to process interleaved multimodal inputs seamlessly on-device.

- The Result: The system achieves ultra-efficient, low-latency inference completely offline. It recognizes objects and analyzes video around the clock without generating a single cent in API token fees.

Use Case Study 3: The Secure Financial Agent

- The Goal: Create a personal assistant that automates tax preparation and reviews sensitive banking documents across 35+ languages.

- The Problem: Financial records cannot be exposed to cloud models due to severe privacy regulations, and processing hundreds of pages of text generates a high token tax.

- The Solution: The user runs NeMoClaw on an NVIDIA DGX Spark to wrap the always-on agent in strict, policy-based privacy guardrails. The agent uses the Gemma 4 26B model for its strong performance on complex problem-solving and reasoning tasks.

- The Result: A highly secure, capable agent that draws context from personal financial files safely. NeMoClaw ensures the agent strictly adheres to privacy rules, keeping all banking data offline, fast, protected, and free from cloud processing fees.

Ready to Start?

NVIDIA, Google, and the open-source community have provided comprehensive tools to get you running and saving on API costs immediately.

- For Desktop Users: NVIDIA has collaborated with Ollama and llama.cpp to provide the best local deployment experience. Download Ollama to run Gemma 4 natively, or install llama.cpp paired with the Gemma 4 GGUF Hugging Face checkpoint.

- For Always-On Agents: Learn how to run OpenClaw for free on RTX GPUs and DGX Spark, or follow the DGX Spark OpenClaw playbook.
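For the Ollama route, a minimal sketch of calling a locally served model from Python might look like the following. It uses Ollama's local REST endpoint (`/api/generate` on port 11434, its default) and only the standard library. The model tag `gemma4` is an assumption used as a placeholder; check the Ollama model library for the actual Gemma 4 tag before running it.

```python
import json
import urllib.request
from typing import Any, Dict

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # ASSUMPTION: placeholder tag, replace with the real one


def build_generate_request(prompt: str, model: str = MODEL_TAG) -> Dict[str, Any]:
    """Build the JSON body for a single, non-streaming completion."""
    return {"model": model, "prompt": prompt, "stream": False}


def generate(
    prompt: str,
    model: str = MODEL_TAG,
    url: str = OLLAMA_URL,
    timeout: float = 120.0,
) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    body = json.dumps(build_generate_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.loads(response.read().decode("utf-8"))
    return payload["response"]
```

With the Ollama server running and the model pulled, `print(generate("Summarize this changelog in one sentence."))` would return text produced entirely on the local GPU, with no cloud API call involved.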

Check out the Google DeepMind announcement blog and the NVIDIA technical blog for more details on how to get started with Gemma 4 on NVIDIA GPUs.

Note: Thanks to the NVIDIA AI team for the thought leadership and resources for this article. The NVIDIA AI team has supported this article for promotion.

The post Defeating the ‘Token Tax’: How Google Gemma 4, NVIDIA, and OpenClaw are Revolutionizing Local Agentic AI: From RTX Desktops to DGX Spark appeared first on MarkTechPost.