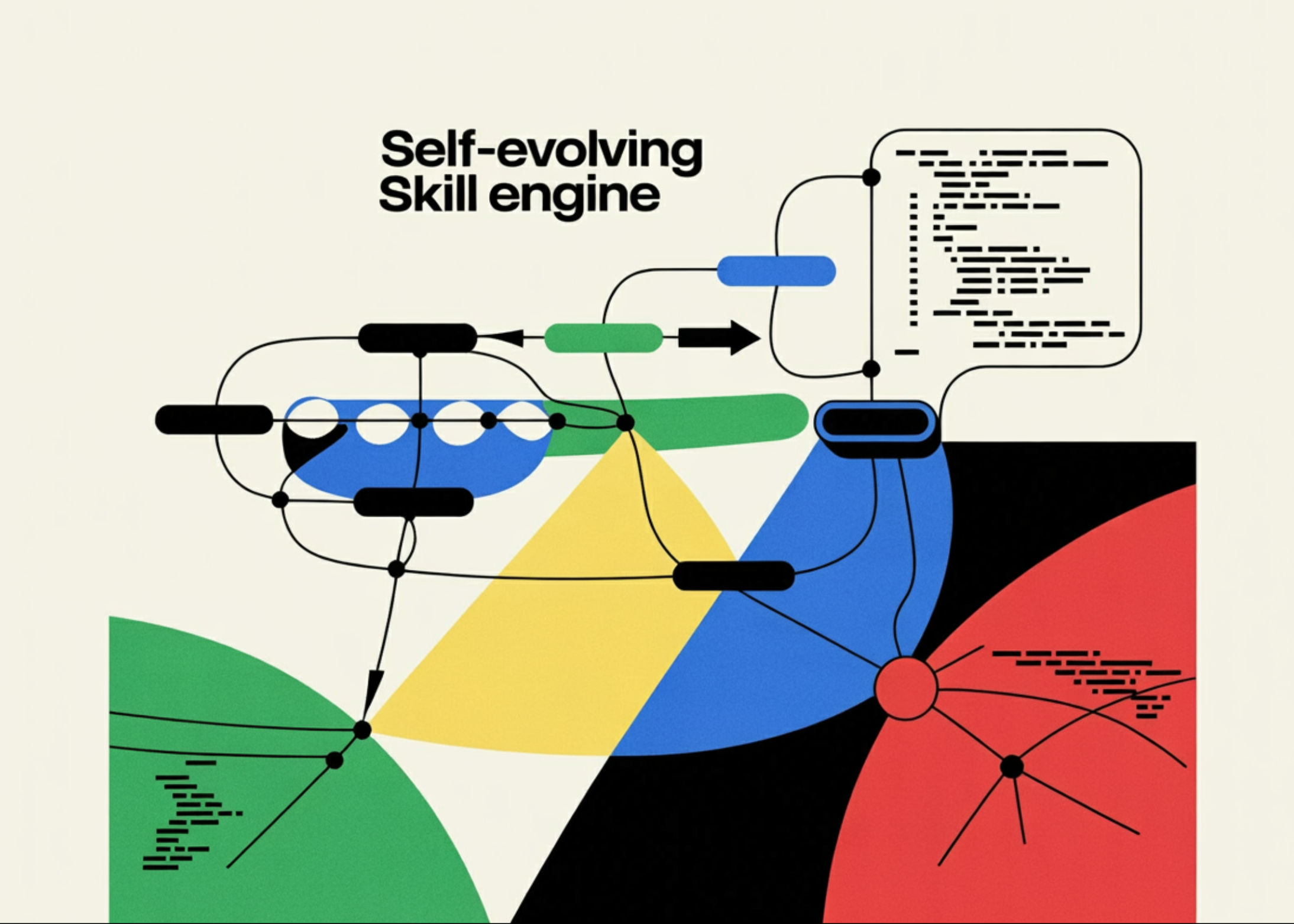

In this tutorial, we explore OpenSpace, a self-evolving skill engine developed by HKUDS that makes AI agents smarter, more cost-efficient, and capable of learning from every task they perform. We walk through the complete lifecycle of OpenSpace: installing the package and configuring an OpenAI model, executing cold-start tasks where no prior skills exist, watching the evolution engine capture reusable patterns, and then re-running similar tasks to observe token savings through skill reuse. Along the way, we create custom skills manually, inspect the SQLite skill database, run multi-task pipelines that accumulate intelligence over time, and demonstrate how the cloud community at open-space.cloud enables agents to share evolved skills. By the end, we have a hands-on understanding of the three evolution modes (FIX, DERIVED, and CAPTURED), the three automatic triggers that keep skills healthy, and the economic impact that OpenSpace reports, including the 4.2x income improvement over the ClawWork baseline and the 46% token reduction measured in the GDPVal benchmark across 50 real-world professional tasks.

import subprocess, sys, os

print("Installing OpenSpace from GitHub (this may take 2-3 minutes)...")
subprocess.check_call([
    sys.executable, "-m", "pip", "install", "-q",
    "git+https://github.com/HKUDS/OpenSpace.git"
])
subprocess.check_call([
    sys.executable, "-m", "pip", "install", "-q", "openai"
])
print("\nInstallation complete!")

try:
    from openspace import OpenSpace
    print("OpenSpace imported successfully")
except ImportError as e:
    print(f"Import issue: {e}")
    print("Trying alternative import path...")
    import openspace
    print(f"openspace package found at: {openspace.__file__}")

import getpass

print("Enter your OpenAI API key (input is hidden):")
api_key = getpass.getpass("OpenAI API Key: ")
os.environ["OPENAI_API_KEY"] = api_key

print("\n[Optional] Enter your OpenSpace Cloud API key")
print("(Get one free at https://open-space.cloud, or press Enter to skip):")
cloud_key = getpass.getpass("OpenSpace Cloud Key: ")
if cloud_key.strip():
    os.environ["OPENSPACE_API_KEY"] = cloud_key.strip()
    print("Cloud API key set")
else:
    print("Skipping cloud features (local mode only)")

MODEL_NAME = "openai/gpt-4o-mini"
os.environ["OPENSPACE_MODEL"] = MODEL_NAME

print(f"\nConfiguration complete!")
print(f"  Model: {MODEL_NAME}")
print(f"  OpenAI Key: {'*' * 8}...{api_key[-4:]}")
print(f"  Cloud: {'Enabled' if cloud_key.strip() else 'Disabled (local only)'}")

from openai import OpenAI

client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])
try:
    # One short request confirms that the key and network access work.
    test_resp = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": "Say 'OpenSpace ready!' in 3 words or less."}],
        max_tokens=10
    )
    print(f"OpenAI API working: {test_resp.choices[0].message.content}")
except Exception as e:
    print(f"OpenAI API error: {e}")
    print("Please check your API key and try again.")

We begin by installing OpenSpace directly from its GitHub repository along with the OpenAI SDK, ensuring all dependencies, including LiteLLM, the skill engine, and the MCP server, are pulled in automatically. We then enter our OpenAI API key through getpass so it is never echoed to the screen, and optionally provide an OpenSpace Cloud key for community features. We verify that everything works by making a quick test call to the OpenAI API, which confirms that our environment is configured and ready for the tutorial.
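The configuration step prints only the last four characters of the key. As a minimal sketch of that masking idea, the helper below wraps it in a function that also handles an empty or very short key; `mask_secret` is a hypothetical name introduced here for illustration and is not part of OpenSpace or the OpenAI SDK.

```python
def mask_secret(secret: str, visible: int = 4) -> str:
    """Return a display-safe form of a secret, showing only its last few characters."""
    secret = secret.strip()
    if not secret:
        return "(not set)"
    # Never reveal more than half of a short secret.
    visible = min(visible, len(secret) // 2)
    tail = secret[-visible:] if visible > 0 else ""
    return "*" * 8 + "..." + tail


# Example values are made up; no real key is used here.
print(mask_secret("sk-example-1234abcd"))
print(mask_secret(""))
```

Capping the visible tail at half the secret's length is a design choice for this sketch, so that a mistyped two- or three-character input is not printed back in full.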

import os
import json
import shutil
import sqlite3
import glob
import asyncio
import time
from pathlib import Path

WORKSPACE = Path("/content/openspace_tutorial")
SKILLS_DIR = WORKSPACE / "skills"
OUTPUT_DIR = WORKSPACE / "outputs"
DB_DIR = WORKSPACE / ".openspace"

# Start from a clean slate so earlier runs do not leak skills into this one.
if WORKSPACE.exists():
    shutil.rmtree(WORKSPACE)
WORKSPACE.mkdir(parents=True)
SKILLS_DIR.mkdir(parents=True)
OUTPUT_DIR.mkdir(parents=True)
DB_DIR.mkdir(parents=True)

os.environ["OPENSPACE_WORKSPACE"] = str(WORKSPACE)
os.environ["OPENSPACE_HOST_SKILL_DIRS"] = str(SKILLS_DIR)

env_content = f"""OPENAI_API_KEY={os.environ['OPENAI_API_KEY']}
OPENSPACE_MODEL={MODEL_NAME}
OPENSPACE_WORKSPACE={WORKSPACE}
"""
env_path = WORKSPACE / ".env"
env_path.write_text(env_content)

print(f"Workspace: {WORKSPACE}")
print(f"Skills: {SKILLS_DIR}")
print(f"Outputs: {OUTPUT_DIR}")
print(f"Database: {DB_DIR}")
print("\nWorkspace ready for cold start execution!")

async def run_cold_start_task():
    print("="*60)
    print("COLD START: No skills exist yet")
    print("="*60)

    task = (
        "Create a Python script that analyzes a CSV file containing "
        "sales data with columns: date, product, quantity, price. "
        "The script should compute monthly revenue, identify the top "
        "3 best-selling products, and generate a summary report as "
        "a formatted text file."
    )
    print(f"\nTask: {task[:100]}...\n")

    start_time = time.time()
    try:
        from openspace import OpenSpace

        async with OpenSpace() as cs:
            result = await cs.execute(task)
            elapsed = time.time() - start_time

        print(f"\nExecution time: {elapsed:.1f}s")
        print(f"\nResponse (first 500 chars):")
        print("-" * 40)
        response_text = result.get("response", str(result))
        print(response_text[:500])

        evolved = result.get("evolved_skills", [])
        if evolved:
            print(f"\nSkills Evolved: {len(evolved)}")
            for skill in evolved:
                origin = skill.get('origin', 'unknown')
                name = skill.get('name', 'unnamed')
                print(f"  • {name} (origin: {origin})")
        else:
            print("\nNo skills evolved yet (may happen post-analysis)")
        return result
    except Exception as e:
        print(f"\nExecution error: {type(e).__name__}: {e}")
        print("\nThis is expected if OpenSpace requires additional setup.")
        print("We'll demonstrate the concepts with direct API calls below.")
        return None

# Top-level await works in notebook environments such as Colab.
cold_start_result = await run_cold_start_task()

def inspect_skill_database():
    db_patterns = [
        str(WORKSPACE / ".openspace" / "*.db"),
        str(WORKSPACE / "*.db"),
        str(WORKSPACE / ".openspace" / "openspace.db"),
        "/content/.openspace/*.db",
    ]
    db_files = []
    for pattern in db_patterns:
        db_files.extend(glob.glob(pattern))

    if not db_files:
        print("No skill database found yet.")
        print("  This is normal if the cold start task hasn't completed")
        print("  or if OpenSpace stores skills elsewhere.")
        print("\n  We'll create a demonstration database below.")
        return None

    db_path = db_files[0]
    print(f"Found database: {db_path}")

    conn = sqlite3.connect(db_path)
    cursor = conn.cursor()
    cursor.execute("SELECT name FROM sqlite_master WHERE type='table'")
    tables = cursor.fetchall()
    print(f"\nTables: {[t[0] for t in tables]}")

    for table in tables:
        table_name = table[0]
        # Quote the identifier so unusual table names do not break the query.
        cursor.execute(f'SELECT COUNT(*) FROM "{table_name}"')
        count = cursor.fetchone()[0]
        print(f"  {table_name}: {count} records")
        if count > 0:
            cursor.execute(f'PRAGMA table_info("{table_name}")')
            columns = [col[1] for col in cursor.fetchall()]
            print(f"    Columns: {columns[:8]}{'...' if len(columns) > 8 else ''}")
            cursor.execute(f'SELECT * FROM "{table_name}" LIMIT 3')
            rows = cursor.fetchall()
            for row in rows:
                print(f"    → {str(row)[:120]}...")

    conn.close()
    return db_path

db_path = inspect_skill_database()

def inspect_skill_files():
    skill_files = []
    search_dirs = [SKILLS_DIR, WORKSPACE / ".openspace", WORKSPACE]
    for search_dir in search_dirs:
        for root, dirs, files in os.walk(search_dir):
            for f in files:
                if f.upper() == "SKILL.MD" or f.endswith(".py"):
                    full_path = os.path.join(root, f)
                    # WORKSPACE contains the other two directories, so skip repeats.
                    if full_path not in skill_files:
                        skill_files.append(full_path)

    if not skill_files:
        print("No skill files found on disk yet.")
        print("  Skills are created after the evolution engine runs.")
        return

    print(f"Found {len(skill_files)} skill-related files:\n")
    for sf in skill_files[:20]:
        rel_path = os.path.relpath(sf, WORKSPACE)
        size = os.path.getsize(sf)
        print(f"  {rel_path} ({size} bytes)")
        # Compare case-insensitively to match the SKILL.MD check above.
        if sf.lower().endswith(".md"):
            with open(sf, 'r') as fh:
                content = fh.read(300)
            print(f"    Preview: {content[:150]}...\n")

inspect_skill_files()

We set up a clean workspace with dedicated directories for skills, outputs, and the OpenSpace database, and write a .env file so OpenSpace knows where to find our credentials and model configuration. We then execute our first task in cold-start mode, a CSV sales data analyzer, where no prior skills exist, and we observe how the evolution engine processes the execution to capture reusable patterns. We finish by inspecting both the SQLite skill database and the on-disk SKILL.md files to see what OpenSpace has stored and how it structures evolved skill metadata.
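When no database exists yet, the same inspection technique can be tried on a throwaway one. The sketch below builds an in-memory SQLite database and lists its tables, columns, and row counts through `sqlite_master` and `PRAGMA table_info`. The `skills` table, its columns, and the sample rows are assumptions made up for illustration; OpenSpace's real schema may look quite different.

```python
import sqlite3

# Hypothetical schema for illustration only; OpenSpace's real tables may differ.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE skills ("
    "id INTEGER PRIMARY KEY, name TEXT NOT NULL, origin TEXT, version TEXT)"
)
conn.executemany(
    "INSERT INTO skills (name, origin, version) VALUES (?, ?, ?)",
    [
        ("csv-sales-analysis", "CAPTURED", "1.0.0"),
        ("data-validation-csv", "manual", "1.0.0"),
    ],
)


def describe_tables(connection):
    """Map each table name to its column names and row count."""
    summary = {}
    rows = connection.execute(
        "SELECT name FROM sqlite_master WHERE type='table'"
    ).fetchall()
    for (table_name,) in rows:
        # Double any embedded quotes, then wrap the identifier in quotes.
        quoted = '"' + table_name.replace('"', '""') + '"'
        columns = [col[1] for col in connection.execute(f"PRAGMA table_info({quoted})")]
        count = connection.execute(f"SELECT COUNT(*) FROM {quoted}").fetchone()[0]
        summary[table_name] = {"columns": columns, "rows": count}
    return summary


summary = describe_tables(conn)
print(summary)
conn.close()
```

Returning a dictionary instead of printing inside the loop keeps the inspection reusable, for example to compare the database before and after a task runs.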

async def run_warm_start_task():
    print("="*60)
    print("WARM START: Reusing previously evolved skills")
    print("="*60)

    task = (
        "Create a Python script that analyzes a CSV file containing "
        "inventory data with columns: date, item, quantity, cost. "
        "The script should compute monthly expenditures, identify the top "
        "5 most purchased items, and output a formatted summary report."
    )
    print(f"\nTask: {task[:100]}...")
    print("  (Similar to cold start task; skills should be reused)\n")

    start_time = time.time()
    try:
        from openspace import OpenSpace

        async with OpenSpace() as cs:
            result = await cs.execute(task)
            elapsed = time.time() - start_time

        print(f"\nExecution time: {elapsed:.1f}s")
        response_text = result.get("response", str(result))
        print(f"\nResponse (first 500 chars):")
        print("-" * 40)
        print(response_text[:500])

        evolved = result.get("evolved_skills", [])
        reused = result.get("reused_skills", [])
        if reused:
            print(f"\nSkills Reused: {len(reused)}")
            for skill in reused:
                print(f"  • {skill.get('name', 'unnamed')}")
        if evolved:
            print(f"\nNew Skills Evolved: {len(evolved)}")
            for skill in evolved:
                print(f"  • {skill.get('name', 'unnamed')} ({skill.get('origin', '')})")
        return result
    except Exception as e:
        print(f"\nExecution error: {type(e).__name__}: {e}")
        print("We'll simulate the comparison below.")
        return None

warm_start_result = await run_warm_start_task()

async def demo_skill_search():
    print("="*60)
    print("SKILL SEARCH & DISCOVERY")
    print("="*60)

    try:
        from openspace import OpenSpace

        async with OpenSpace() as cs:
            queries = [
                "CSV data analysis with pandas",
                "PDF report generation",
                "web scraping with error handling",
            ]
            for query in queries:
                print(f"\nQuery: '{query}'")
                if hasattr(cs, 'skill_engine') and cs.skill_engine:
                    results = await cs.skill_engine.search(query)
                    if results:
                        for r in results[:3]:
                            print(f"  {r.get('name', 'unnamed')} "
                                  f"(score: {r.get('score', 'N/A')})")
                    else:
                        print("  (no matching skills found)")
                else:
                    print("  (skill engine not initialized; "
                          "skills accumulate after task executions)")
    except Exception as e:
        print(f"\nSearch demo: {e}")
        print("\nSkill search becomes available after skills are evolved.")
        print("  In production, run several tasks first to build up the skill database.")

await demo_skill_search()

def create_custom_skill(skill_name, description, instructions, triggers):
    skill_dir = SKILLS_DIR / skill_name
    skill_dir.mkdir(parents=True, exist_ok=True)

    # json.dumps quotes the description so colons inside it stay valid YAML.
    skill_md = f"""---
name: {skill_name}
description: {json.dumps(description)}
version: 1.0.0
origin: manual
triggers: {json.dumps(triggers)}
---

# {skill_name}

{description}

## Instructions

{instructions}

## Quality Metrics

- Applied Rate: 0% (new skill)
- Completion Rate: N/A
- Effective Rate: N/A
"""
    skill_path = skill_dir / "SKILL.md"
    skill_path.write_text(skill_md)
    print(f"Created skill: {skill_name}")
    print(f"  Path: {skill_path}")
    return skill_path

create_custom_skill(
    skill_name="data-validation-csv",
    description="Validate CSV files for common issues before processing: check encoding, detect delimiter, handle missing values, verify column types.",
    instructions="""When working with CSV data:

1. **Encoding Detection**: Try UTF-8 first, then fall back to latin-1, cp1252
2. **Delimiter Detection**: Use csv.Sniffer() to auto-detect delimiter
3. **Missing Values**: Count NaN/null per column, report percentage
4. **Type Inference**: Check if numeric columns are actually numeric
5. **Duplicate Check**: Identify duplicate rows

```python
import pandas as pd
import csv
import chardet

def validate_csv(filepath):
    with open(filepath, 'rb') as f:
        result = chardet.detect(f.read(10000))
    encoding = result['encoding']
    df = pd.read_csv(filepath, encoding=encoding)
    report = {
        'rows': len(df),
        'columns': list(df.columns),
        'missing': df.isnull().sum().to_dict(),
        'duplicates': df.duplicated().sum(),
        'dtypes': df.dtypes.astype(str).to_dict()
    }
    return report
```""",
    triggers=["csv", "data validation", "data quality", "pandas"]
)

print()

create_custom_skill(
    skill_name="report-gen-fallback",
    description="Generate reports with multiple fallback strategies: try reportlab PDF first, fall back to HTML, then plain text.",
    instructions="""When generating reports:

1. **Try reportlab PDF** first for professional output
2. **Fall back to HTML** if reportlab fails (common in sandboxed envs)
3. **Final fallback: plain text** with formatted tables

Always verify the output file exists and has non-zero size after generation.

```python
def generate_report(data, output_path):
    try:
        from reportlab.lib.pagesizes import letter
        from reportlab.platypus import SimpleDocTemplate
        # (PDF-building code goes here)
        return output_path
    except ImportError:
        pass
    try:
        html_path = output_path.replace('.pdf', '.html')
        # (HTML-writing code goes here)
        return html_path
    except Exception:
        pass
    txt_path = output_path.replace('.pdf', '.txt')
    # (plain-text writing code goes here)
    return txt_path
```""",
    triggers=["report", "PDF", "document generation", "reportlab"]
)

print()

create_custom_skill(
    skill_name="execution-recovery",
    description="Multi-layer execution recovery: handle sandbox failures, shell errors, and file write issues with progressive fallbacks.",
    instructions="""When code execution fails:

1. **Capture the full error** including traceback
2. **Identify the failure type**: ImportError, PermissionError, TimeoutError, etc.
3. **Apply targeted fix**:
   - ImportError → pip install the missing package
   - PermissionError → change output directory to /tmp
   - TimeoutError → reduce data size or add chunking
   - MemoryError → process in batches
4. **Retry with fix applied**
5. **Log the fix** for future skill evolution

This skill was captured from 28 real execution failures in the GDPVal benchmark.""",
    triggers=["error", "failure", "recovery", "fallback", "retry"]
)

print("\n" + "="*60)
print("All registered skills:")
print("="*60)
for skill_dir in sorted(SKILLS_DIR.iterdir()):
    if skill_dir.is_dir():
        skill_md = skill_dir / "SKILL.md"
        if skill_md.exists():
            content = skill_md.read_text()
            # The first "name:" line in the front matter holds the skill name.
            for line in content.split('\n'):
                if line.startswith('name:'):
                    name = line.split(':', 1)[1].strip()
                    print(f"  {name}")
                    break

We run a second task deliberately similar to the cold-start task, allowing OpenSpace to discover and reuse previously evolved skills, and we report its execution time and any reused skills for comparison with the first run. We then query the hybrid skill search system, which combines BM25 and embedding-based ranking to find the most relevant skills for a given task description. Finally, we manually create three skills (data validation, report generation with fallbacks, and execution recovery) following the SKILL.md convention, showing how we seed the system with domain knowledge before the evolution engine takes over.
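To make the idea of hybrid ranking concrete, here is a minimal, self-contained sketch that mixes a lexical BM25 score with a vector-similarity score. It is not OpenSpace's implementation: the function names, the `alpha` weighting, the max-normalization, and the skill descriptions are all assumptions for illustration, and cosine similarity over word counts stands in for a real embedding model.

```python
import math
from collections import Counter


def tokenize(text):
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document against the query with the Okapi BM25 formula."""
    tokenized = [tokenize(d) for d in docs]
    avg_len = sum(len(t) for t in tokenized) / len(tokenized)
    n_docs = len(tokenized)
    doc_freq = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def cosine_scores(query, docs):
    """Toy stand-in for embedding similarity: cosine over word-count vectors."""
    q = Counter(tokenize(query))
    q_norm = math.sqrt(sum(v * v for v in q.values()))
    scores = []
    for d in docs:
        v = Counter(tokenize(d))
        dot = sum(q[t] * v[t] for t in q)
        v_norm = math.sqrt(sum(x * x for x in v.values()))
        scores.append(dot / (q_norm * v_norm) if q_norm and v_norm else 0.0)
    return scores


def normalize(scores):
    """Scale scores so the best one is 1.0 (all zeros stay zero)."""
    hi = max(scores)
    return [s / hi if hi > 0 else 0.0 for s in scores]


def hybrid_rank(query, skills, alpha=0.5):
    """Rank skill names by a weighted mix of normalized BM25 and cosine scores."""
    names = list(skills)
    docs = [skills[n] for n in names]
    lexical = normalize(bm25_scores(query, docs))
    semantic = normalize(cosine_scores(query, docs))
    mixed = [alpha * l + (1 - alpha) * s for l, s in zip(lexical, semantic)]
    return sorted(zip(names, mixed), key=lambda pair: pair[1], reverse=True)


# Hypothetical skill descriptions, loosely based on the three skills created above.
skills = {
    "data-validation-csv": "validate csv files check encoding delimiter missing values",
    "report-gen-fallback": "generate pdf report with html and plain text fallback",
    "execution-recovery": "recover from execution errors with retry and fallback",
}
ranking = hybrid_rank("csv data validation", skills)
print(ranking[0][0])
```

Normalizing both score lists before mixing keeps either signal from dominating just because of its scale; `alpha` then controls how much weight exact keyword matches get relative to similarity.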

async def demo_cloud_community():
    print("="*60)
    print("CLOUD COMMUNITY INTEGRATION")
    print("="*60)

    cloud_key = os.environ.get("OPENSPACE_API_KEY", "")
    if not cloud_key:
        print("\nCloud API key not set. Showing what's possible:")
        print("\n  With a cloud key, you can:")
        print("  • Search community skills by keyword or task description")
        print("  • Download evolved skills from other agents")
        print("  • Upload your evolved skills to share")
        print("  • Create teams with shared skill repositories")
        print("  • Track skill lineage and evolution history")
        print("\n  Get a free key at: https://open-space.cloud")
        print("\n  CLI commands (outside Colab):")
        print("    $ openspace-download-skill <skill_id>")
        print("    $ openspace-upload-skill /path/to/skill/dir")
        return

    try:
        from openspace.cloud.client import CloudClient

        client = CloudClient(api_key=cloud_key)
        search_queries = [
            "data analysis CSV",
            "PDF generation",
            "web scraping",
        ]
        for query in search_queries:
            print(f"\nSearching cloud for: '{query}'")
            results = await client.search(query)
            if results:
                for r in results[:3]:
                    print(f"  {r.get('name', 'unnamed')}")
                    print(f"    Author: {r.get('author', 'unknown')}")
                    print(f"    Downloads: {r.get('downloads', 0)}")
                    print(f"    Version: {r.get('version', '1.0')}")
            else:
                print("  (no results)")
    except ImportError:
        print("\nCloud client not available in this installation.")
        print("  Install the full package for cloud features.")
    except Exception as e:
        print(f"\nCloud error: {e}")

await demo_cloud_community()

gdpval_metrics = {
    "categories": [
        {
            "name": "Documents & Correspondence",
            "tasks": 7,
            "phase1_income": 71, "phase2_income": 74,
            "token_reduction": 56,
            "top_skill": "document-gen-fallback (13 versions)"
        },
        {
            "name": "Compliance & Forms",
            "tasks": 11,
            "phase1_income": 51, "phase2_income": 70,
            "token_reduction": 51,
            "top_skill": "PDF checklist pipeline"
        },
        {
            "name": "Media Production",
            "tasks": 3,
            "phase1_income": 53, "phase2_income": 58,
            "token_reduction": 46,
            "top_skill": "ffmpeg codec fallbacks"
        },
        {
            "name": "Engineering",
            "tasks": 4,
            "phase1_income": 70, "phase2_income": 78,
            "token_reduction": 43,
            "top_skill": "multi-deliverable coordination"
        },
        {
            "name": "Spreadsheets",
            "tasks": 15,
            "phase1_income": 63, "phase2_income": 70,
            "token_reduction": 37,
            "top_skill": "xlsx formula patterns"
        },
        {
            "name": "Strategy & Analysis",
            "tasks": 10,
            "phase1_income": 88, "phase2_income": 89,
            "token_reduction": 32,
            "top_skill": "document structure reuse"
        }
    ],
    "overall": {
        "total_skills_evolved": 165,
        "income_multiplier": "4.2x vs ClawWork baseline",
        "value_capture": "72.8% ($11,484 / $15,764)",
        "avg_quality": "70.8%",
        "avg_token_reduction": "45.9%"
    },
    "skill_taxonomy": {
        "File Format I/O": 44,
        "Execution Recovery": 29,
        "Document Generation": 26,
        "Quality Assurance": 23,
        "Task Orchestration": 17,
        "Domain Workflow": 13,
        "Web & Research": 11
    }
}

print("="*60)
print("GDPVal BENCHMARK RESULTS (OpenSpace vs Baseline)")
print("="*60)

print(f"\nOverall Performance:")
for k, v in gdpval_metrics["overall"].items():
    label = k.replace('_', ' ').title()
    print(f"  {label}: {v}")

print(f"\nPerformance by Category:")
print(f"  {'Category':<28} {'P1→P2 Income':<16} {'Token ↓':<10} {'Tasks'}")
print(f"  {'-'*70}")
for cat in gdpval_metrics["categories"]:
    income_str = f"{cat['phase1_income']}% → {cat['phase2_income']}%"
    token_str = f"-{cat['token_reduction']}%"
    print(f"  {cat['name']:<28} {income_str:<16} {token_str:<10} {cat['tasks']}")

# Text bar chart of evolved skills by purpose (one block per two skills)
print(f"\n Evolved Skill Taxonomy ({sum(gdpval_metrics['skill_taxonomy'].values())} total):")
for purpose, count in gdpval_metrics["skill_taxonomy"].items():
    bar = "█" * (count // 2)
    print(f" {purpose:<24} {count:>3} {bar}")

We explore cloud community integration at open-space.cloud, demonstrating how agents search for, download, and upload evolved skills to share collective intelligence across teams. We display the full GDPVal benchmark results across six professional task categories, showing how OpenSpace achieves its 4.2x income improvement and 46% average token reduction compared to the ClawWork baseline. We visualize the taxonomy of the 165 skills that were autonomously evolved during the benchmark, revealing that the largest groups cover file format handling and execution recovery rather than domain-specific knowledge.
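To sanity-check the summary above against the numbers in `gdpval_metrics`, the following minimal sketch recomputes each taxonomy group's share of the listed skill counts and the Phase 1 to Phase 2 income gain per category. The figures are copied from the dictionary in this tutorial; the helper names `taxonomy_shares` and `income_gains` are our own illustrative choices and are not part of OpenSpace.

```python
# Figures copied from the gdpval_metrics dictionary shown in this tutorial.
skill_taxonomy = {
    "File Format I/O": 44,
    "Execution Recovery": 29,
    "Document Generation": 26,
    "Quality Assurance": 23,
    "Task Orchestration": 17,
    "Domain Workflow": 13,
    "Web & Research": 11,
}

# (category, Phase 1 income %, Phase 2 income %)
category_income = [
    ("Documents & Correspondence", 71, 74),
    ("Compliance & Forms", 51, 70),
    ("Media Production", 53, 58),
    ("Engineering", 70, 78),
    ("Spreadsheets", 63, 70),
    ("Strategy & Analysis", 88, 89),
]


def taxonomy_shares(taxonomy):
    """Return each group's fraction of the listed skill counts (hypothetical helper)."""
    total = sum(taxonomy.values())
    return {name: count / total for name, count in taxonomy.items()}


def income_gains(rows):
    """Return (category, phase2 - phase1) pairs, largest gain first (hypothetical helper)."""
    gains = [(name, p2 - p1) for name, p1, p2 in rows]
    return sorted(gains, key=lambda pair: pair[1], reverse=True)


shares = taxonomy_shares(skill_taxonomy)
for name, share in shares.items():
    print(f"{name:<22} {share:6.1%}")

top_two = shares["File Format I/O"] + shares["Execution Recovery"]
print(f"File Format I/O + Execution Recovery: {top_two:.1%}")

for name, gain in income_gains(category_income):
    print(f"{name:<28} +{gain} points")
```

With these figures, the two largest groups together account for a bit under half of the listed skills, and Compliance & Forms shows the largest income gain between phases.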

PIPELINE_TASKS = [
    {
        "name": "CSV Analyzer",
        "query": (
            "'employee_id, name, department, salary, start_date', "
            "calculates average salary by department, finds employees "
            "with tenure > 5 years, and saves results to a new CSV."
        ),
        "category": "Spreadsheets"
    },
    {
        "name": "Text Report Generator",
        "query": (
            "Create a Python script that generates a formatted text "
            "report from a dictionary of financial data including "
            "revenue, expenses, and profit margins. Include headers, "
            "separators, and a summary section with key insights."
        ),
        "category": "Documents"
    },
    {
        "name": "Data Quality Checker",
        "query": (
            "Write a Python data quality checking tool that validates a "
            "pandas DataFrame: check for nulls, duplicates, outliers "
            "(using IQR method), type mismatches, and generates a "
            "data quality score from 0-100 with detailed breakdown."
        ),
        "category": "Quality Assurance"
    },
]

async def run_pipeline():
    print("="*60)
    print(" MULTI-TASK PIPELINE WITH EVOLUTION TRACKING")
    print("="*60)

    results = []
    total_skills_before = 0

    for i, task_info in enumerate(PIPELINE_TASKS, 1):
        print(f"\n{'─'*60}")
        print(f" Task {i}/{len(PIPELINE_TASKS)}: {task_info['name']}")
        print(f" Category: {task_info['category']}")
        print(f"{'─'*60}")

        start_time = time.time()

        try:
            from openspace import OpenSpace

            # Each task runs in its own OpenSpace session
            async with OpenSpace() as cs:
                result = await cs.execute(task_info["query"])

            elapsed = time.time() - start_time
            evolved = result.get("evolved_skills", [])
            reused = result.get("reused_skills", [])

            task_result = {
                "name": task_info["name"],
                "time": elapsed,
                "evolved_count": len(evolved),
                "reused_count": len(reused),
                "success": True
            }
            results.append(task_result)

            print(f"  Completed in {elapsed:.1f}s")
            print(f"  Skills evolved: {len(evolved)}")
            print(f"  Skills reused: {len(reused)}")

        except Exception as e:
            # Record the failure so the summary still covers every task
            elapsed = time.time() - start_time
            results.append({
                "name": task_info["name"],
                "time": elapsed,
                "evolved_count": 0,
                "reused_count": 0,
                "success": False,
                "error": str(e)
            })
            print(f"  Error: {e}")

    print(f"\n{'═'*60}")
    print(" PIPELINE SUMMARY")
    print(f"{'═'*60}")

    total_time = sum(r["time"] for r in results)
    total_evolved = sum(r["evolved_count"] for r in results)
    total_reused = sum(r["reused_count"] for r in results)
    successes = sum(1 for r in results if r["success"])

    print(f" Tasks completed: {successes}/{len(results)}")
    print(f" Total time: {total_time:.1f}s")
    print(f" Total skills evolved: {total_evolved}")
    print(f" Total skills reused: {total_reused}")

    if total_reused > 0:
        print("\n  Skill reuse increased over the pipeline,")
        print(" demonstrating the self-evolution loop!")

    return results

pipeline_results = await run_pipeline()

def analyze_evolution_with_openai():
    print("="*60)
    print(" AI-POWERED EVOLUTION ANALYSIS")
    print("="*60)

    # Collect the SKILL.md file from every skill directory
    skill_contents = {}
    for skill_dir in SKILLS_DIR.iterdir():
        if skill_dir.is_dir():
            skill_md = skill_dir / "SKILL.md"
            if skill_md.exists():
                skill_contents[skill_dir.name] = skill_md.read_text()

    if not skill_contents:
        print("\n No skills to analyze yet.")
        return

    # Truncate each skill to 500 characters to keep the prompt small
    skills_summary = "\n\n".join([
        f"### Skill: {name}\n{content[:500]}"
        for name, content in skill_contents.items()
    ])

    from openai import OpenAI
    client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[
            {
                "role": "system",
                "content": (
                    "You are a skill evolution analyst for OpenSpace. "
                    "Analyze the given skills and provide insights on: "
                    "1) Skill coverage gaps, 2) Potential evolution paths, "
                    "3) Skill interaction opportunities, 4) Recommended "
                    "new skills to create. Be concise and actionable."
                )
            },
            {
                "role": "user",
                "content": f"Analyze these OpenSpace skills:\n\n{skills_summary}"
            }
        ],
        max_tokens=800
    )

    analysis = response.choices[0].message.content
    print(f"\n{analysis}")

    usage = response.usage
    print("\n Analysis token cost:")
    print(f" Input: {usage.prompt_tokens} tokens")
    print(f" Output: {usage.completion_tokens} tokens")
    print(f" Total: {usage.total_tokens} tokens")

analyze_evolution_with_openai()

def demonstrate_token_savings():
    print("="*60)
    print(" TOKEN SAVINGS DEMONSTRATION")
    print("="*60)

    from openai import OpenAI
    client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

    task = (
        "computes monthly revenue, and generates a text report."
    )

    # Cold start: the model sees only the task
    cold_messages = [
        {"role": "system", "content": "You are a coding assistant. Write complete, working Python code."},
        {"role": "user", "content": task}
    ]
    cold_response = client.chat.completions.create(
        model="gpt-4o-mini", messages=cold_messages, max_tokens=1500
    )
    cold_tokens = cold_response.usage.total_tokens

    skill_context = """
## Available Skill: csv-data-analysis (v3, evolved from 28 executions)
Pattern: Use pandas read_csv with encoding detection. Group by
month using pd.Grouper(key='date', freq='ME'). Sum revenue column.
Use tabulate for text report formatting. Fallback: plain text with
f-strings if tabulate unavailable.
Template:
```python
import pandas as pd
df = pd.read_csv(filepath)
df['date'] = pd.to_datetime(df['date'])
monthly = df.groupby(pd.Grouper(key='date', freq='ME'))['revenue'].sum()
```
"""

    # Warm start: the same task, with the evolved skill added to the system prompt
    warm_messages = [
        {
            "role": "system",
            "content": (
                "You are a coding assistant with access to pre-evolved skills. "
                "Reuse the provided skill patterns to write efficient code. "
                "Only add what's missing; don't re-derive what the skill provides.\n\n"
                f"{skill_context}"
            )
        },
        {"role": "user", "content": task}
    ]
    warm_response = client.chat.completions.create(
        model="gpt-4o-mini", messages=warm_messages, max_tokens=1000
    )
    warm_tokens = warm_response.usage.total_tokens

    savings_pct = ((cold_tokens - warm_tokens) / cold_tokens) * 100

    print(f"\n Cold Start (no skills): {cold_tokens:>6} tokens")
    print(f" Warm Start (with skill): {warm_tokens:>6} tokens")
    print(f" Savings: {cold_tokens - warm_tokens:>6} tokens ({savings_pct:.1f}%)")

    print(f"\n Cold response length: {len(cold_response.choices[0].message.content)} chars")
    print(f" Warm response length: {len(warm_response.choices[0].message.content)} chars")

    print("\n In OpenSpace's GDPVal benchmark, skill reuse achieved")
    print(" an average 45.9% token reduction across 50 professional tasks.")
    print(" The warm start also produces higher quality output because")
    print(" skills encode battle-tested patterns from real executions.")

demonstrate_token_savings()

print("\n Tutorial complete! Star the repo if this helped:")
print("  https://github.com/HKUDS/OpenSpace")

We run a three-task pipeline sequentially: a CSV analyzer, a text report generator, and a data quality checker, tracking how skills accumulate and reuse increases with each successive task, mirroring the GDPVal benchmark’s Phase 1 design. We use the OpenAI API to perform an AI-powered analysis of our evolved skill library, identifying coverage gaps, potential evolution paths, and recommended new skills to create. We close with a direct cold-versus-warm token comparison that measures real savings by sending the same task with and without skill context, demonstrating concretely how pre-evolved patterns reduce both token cost and response length.
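Because the warm request carries the skill text in its system prompt, its input side is larger than the cold request's, so a comparison of total tokens alone can hide where the savings come from. The sketch below splits the comparison into prompt, completion, and total tokens. The `Usage` dataclass simply mirrors the `prompt_tokens` and `completion_tokens` fields read from `response.usage` in the code above; `savings_pct`, `compare`, and the sample numbers are hypothetical and only for illustration, not measured results.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """Stand-in for the token counts read from an API response (illustrative)."""
    prompt_tokens: int
    completion_tokens: int

    @property
    def total_tokens(self) -> int:
        return self.prompt_tokens + self.completion_tokens


def savings_pct(cold: int, warm: int) -> float:
    """Percentage reduction from cold to warm; returns 0.0 if cold is not positive."""
    if cold <= 0:
        return 0.0
    return (cold - warm) / cold * 100


def compare(cold: Usage, warm: Usage) -> dict:
    """Break the cold-vs-warm comparison into prompt, completion, and total."""
    return {
        "prompt": savings_pct(cold.prompt_tokens, warm.prompt_tokens),
        "completion": savings_pct(cold.completion_tokens, warm.completion_tokens),
        "total": savings_pct(cold.total_tokens, warm.total_tokens),
    }


# Hypothetical numbers for illustration only.
cold = Usage(prompt_tokens=40, completion_tokens=900)
warm = Usage(prompt_tokens=200, completion_tokens=500)

report = compare(cold, warm)
for part, pct in report.items():
    print(f"{part:<11} {pct:+.1f}%")
```

A negative prompt figure is expected here, since the skill context adds input tokens; the net saving depends on the completion shrinking by more than the prompt grows. The guard in `savings_pct` also avoids a division by zero if a request reports no tokens.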

In conclusion, we saw firsthand how OpenSpace transforms the way AI agents operate, shifting them from stateless tools that reason from scratch on every task into self-improving systems that accumulate expertise with each one. We observed the cold-to-warm transition, in which skills learned from earlier executions reduce both cost and latency in subsequent runs. We built and registered our own custom skills to seed domain knowledge, and we used OpenAI’s API to analyze evolution patterns across our skill library. The key insight we take away is that OpenSpace treats skills not as static configuration files but as living entities that auto-repair when tools break, auto-improve when better patterns emerge, and auto-propagate when connected to the cloud community. Whether we integrate OpenSpace into an existing agent like Claude Code or Codex via its MCP server, or use it standalone as an AI co-worker, we now have the foundation to build agents that genuinely get better and cheaper over time.

The post A Coding Implementation to Design Self-Evolving Skill Engine with OpenSpace for Skill Learning, Token Efficiency, and Collective Intelligence appeared first on MarkTechPost.